A.K.A. The Serendipitous Nature of Debugging ORA- Errors

There is usually an Exadata angle to my posts, and this one won’t disappoint. It started out as I was debugging an “ORA-39777: data saves are not allowed when loading lob columns” error.

The Original Problem

Using SQL*Loader, several of my customers were suddenly seeing ORA-39777 (data saves are not allowed when loading lob columns) on a couple of tables. This was strange since none of our standard released tables had a CLOB column; the data model has not changed for at least a few months.

At first I thought that perhaps the Oracle client version, and as a result the SQL*Loader version, was changed; however, when I tried reproducing the error with every combination of the Oracle client from 12.1.0.1 through 19c (19.4) against database versions 12.1.0.2 through 19c; every test case I tried worked fine. At that point, I was thinking it could be data related or a different client patch set. Until I could get more details on that, I decided to research a different aspect of the error, so the next step was to look and see what might be in Oracle Support.

Searching Oracle Support

The only reference I found to ORA-37777 was this bug:

Bug 20254971 : ORA-39777 AT DIRECT PATH LOADING OF CLOB DATA USING SQLLOADER

It was an old bug going back to 11.2.0.4, and there is still no patch, but the bug is supposed to be fixed in 19.1. But one part of the mystery was solved — note the “DIRECT PATH LOADING” part of the bug description. I had recently recommended changing their SQL*Loader control files to use direct path loads since there is no downside and all upside for that change in their environment. Still, the bug references two INFILE clauses in the control file, and their control files always had just one. The other question remained — where is the CLOB?

MAX_STRING_SIZE

The control files that hit the error all referenced tables with “wide” VARCHAR2 columns such as VARCHAR2(5000) and VARCHAR2(10000). The parameter MAX_STRING_SIZE, available since Oracle Database 12c Release 1, when set to EXTENDED, allows for VARCHAR2 columns up to 32767. Our released code now requires MAX_STRING_SIZE set to EXTENDED. But it’s still not a CLOB, right? When I do a DESCRIBE on a table with a wide VARCHAR2, it sure looks like a VARCHAR2:

SQL> describe testwide

Name Null? Type

---------------------- -------- --------------------

ID NUMBER

TM DATE

BIGSTR VARCHAR2(5000) Things Are Not Always As They Seem

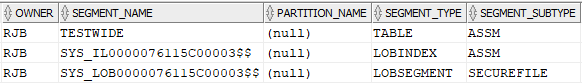

When I created the sample table TESTWIDE I decided to look a little deeper. Looking in DBA_SEGMENTS, I was somewhat surprised to see this:

In other words, under the covers, any VARCHAR2 column wider than 4000 is implemented as a LOB.

Can I Use Sleight of Hand to Work Around This?

As it turns out, there is a way to make wide VARCHAR2 columns behave more like “real” VARCHAR2 columns and not CLOBs, prevent them from triggering error messages, and keep them in line (pun intended). Create the table as VARCHAR2(4000), then ALTER the column to be the desired width:

SQL> create table testwide

2 (

3 id number,

4 tm date default sysdate,

5 bigstr varchar2(4000)

6 ) segment creation immediate;

Table created.

SQL> alter table testwide modify bigstr varchar2(5000);

Table altered.

SQL> All of the columns now appear to reside in a single segment, with the VARCHAR2 column in-line.

Performance Implications

What are the practical implications? Perhaps having all of the extended VARCHAR2 columns in-line will avoid the ORA- error, but otherwise, there could be some performance differences. The performance could get worse — if you are only searching on or using the ID or TM columns, you would avoid any I/O on the BIGSTR column if it were stored in a LOB segment. But from an operational perspective, you would think that an in-line extended VARCHAR2 column would behave more like a VARCHAR2 than a CLOB. And finally, what if you had a column defined as VARCHAR2(32767)? At worst, a fully-populated VARCHAR2(32767) column would end up in a chained row. A table with many fully-populated VARCHAR2(5000) columns would leave a lot of empty space in each block for a database with an 8K block size.

What Happens on Exadata?

There are potential performance implications on Oracle Exadata as well, some not so obvious at first.

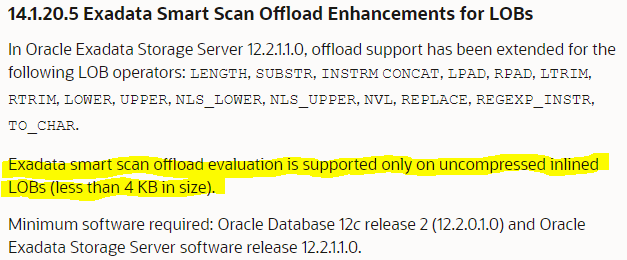

On Exadata, I wanted to see how cell server offloading would work, if at all, with CLOBs versus “inline” wide VARCHAR2s. The documentation for the latest version of Exadata cell server software (19.x as of this writing) gave me a clue as to what would be in store for me:

There is no mention of any other LOB-related enhancements beyond Exadata Storage Software version 12.2.x.

Test Case Setup

For the test, I first created the table with the column BIGSTR as VARCHAR2(5000), populated it with 2M rows, filling the VARCHAR2 column completely with a different repeated character in each row. then ran a SELECT statement with a somewhat selective WHERE clause to see if predicate offloading was being leveraged:

select /*+ parallel(4) monitor */ id,tm,substr(bigstr,1,20)

from testwide

where instr(bigstr,'$$$') > 0;For the second run, I recreated the table with the column BIGSTR as VARCHAR2(4000) then immediately extended the column to VARCHAR2(5000) and populated it with the same 2M rows.

Performance Results

The performance was somewhat what I expected. With the out-of-line CLOB masquerading as a VARCHAR2, retrieving the entire result set took over 2 minutes and drove about 50 GB of buffer cache activity, and no apparent offloading operations:

When the VARCHAR2(5000) was in-line, the predicate was offloaded with about 1/3rd of the buffer cache activity and finished in under 30 seconds:

It’s a good day when you see Cell Offload Efficiency at 99%.

In other words, when the VARCHAR2 was out-of-line, all rows from the SELECT were returned to the compute layer and filtered there. The cell-level session statistics comparison showed the same thing:

Lessons Learned

When is a VARCHAR2 not really a VARCHAR2, even though the Oracle data dictionary says it is? When it’s a VARCHAR2 with more than 4000 characters and MAX_STRING_SIZE is set to EXTENDED. It looks much more like an out-of-line CLOB from a segment perspective.

As a general rule, make sure you’re monitoring your Exadata storage layer predicate offloading performance. There are very few single-row functions and column types that can’t be offloaded, and when you run into those use cases, there’s often an easy fix such as the one I found here.

What’s Next?

There are many more things to investigate. What if I used HCC on the table? What about indexes, compressed or not? Am I going to waste a lot of disk space or end up with chained rows when a given row has a VARCHAR2(5000) that’s almost always at the maximum size? How can storage indexes be leveraged so I don’t need to do as much I/O at the storage layer?

Epilogue

You may have noticed the caption to one of the images: CLOBCHAR2. Yes, I just made up that term, and perhaps it will catch on given that the instantiation of a wide VARCHAR2 is implemented as a CLOB. You heard it here first.